How to Reduce Flaky Tests in Automation Frameworks

Flaky tests are architecture problems that compound quietly, and the way most teams respond to them makes things worse.

They emerge from a small set of recurring conditions: shared state between parallel test runs, timing dependencies in async operations, environment instability, and test data that is not isolated between executions. When these pile up, your team starts making a quiet trade: retry instead of investigate, quarantine instead of fix.

Google, Spotify, Atlassian, and Daimler Truck AG have all documented this exact trajectory. So has almost every scaling SaaS team we have worked with that treated flaky tests as individual problems rather than architectural issues.

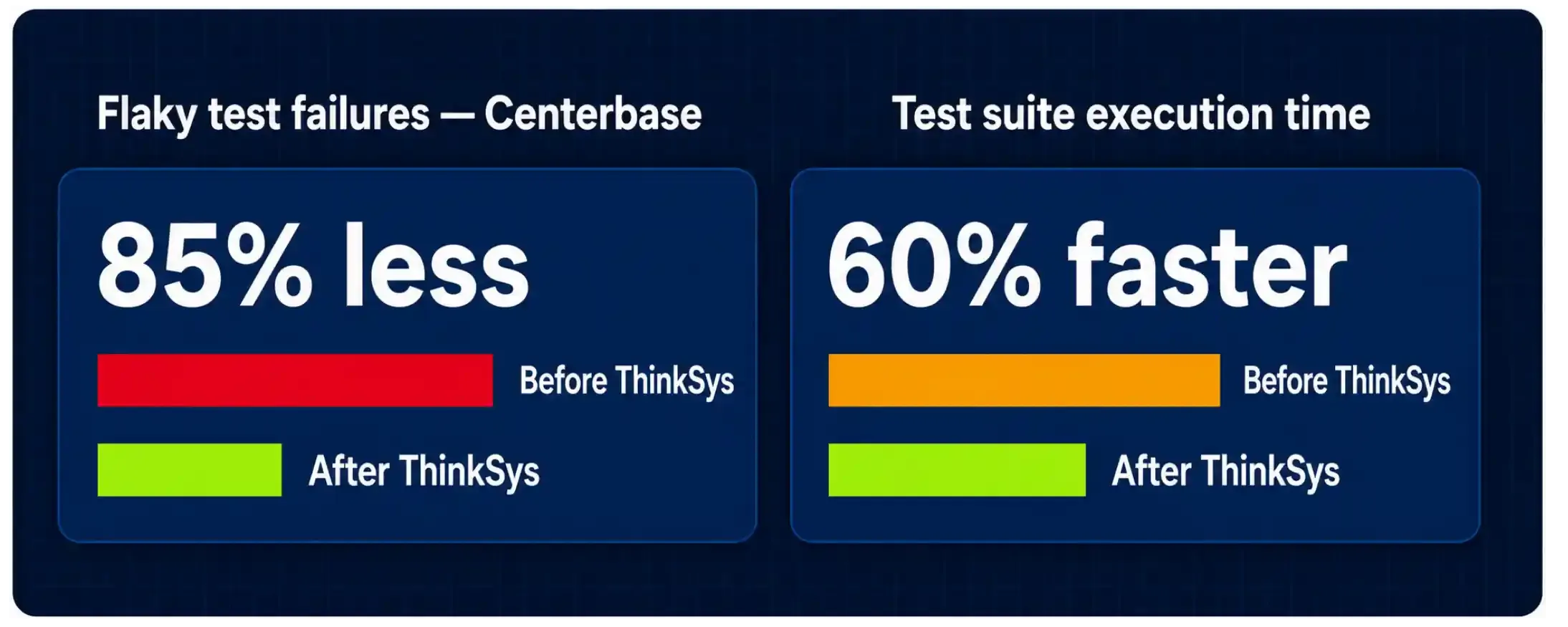

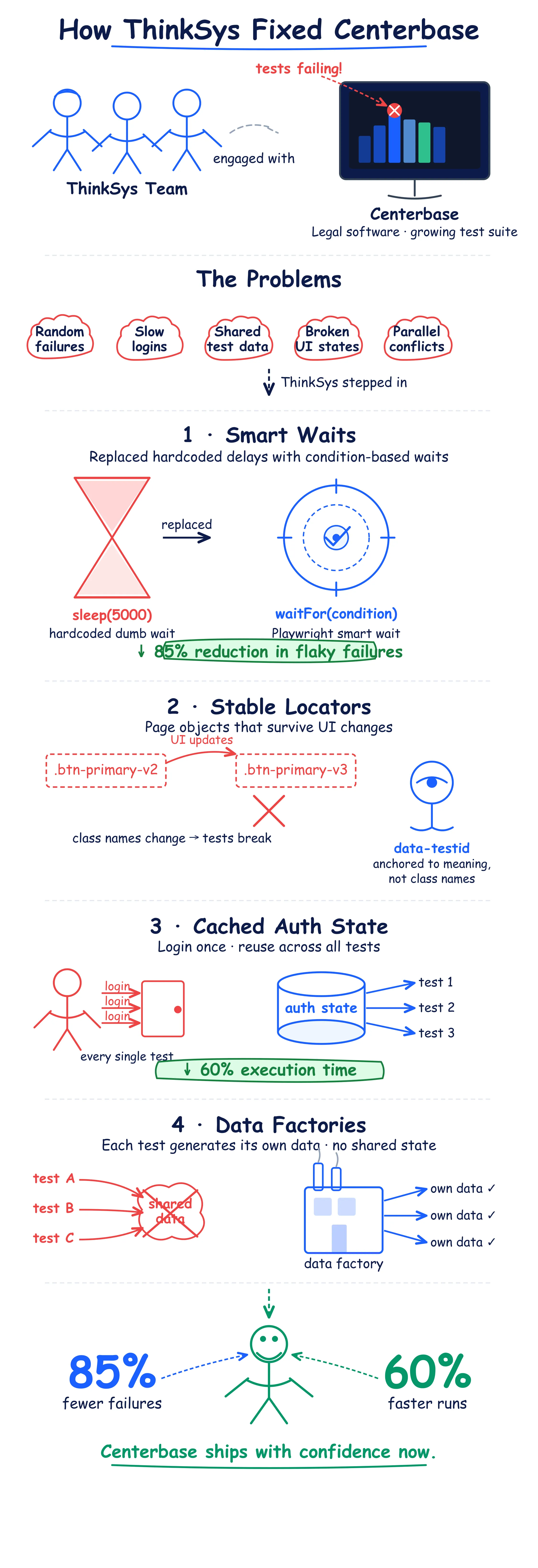

Centerbase, a legal practice management software provider, came to us facing exactly this pattern: false positives, constant manual maintenance, and a suite that the team had stopped trusting. We reduced their flaky test failures by 85% and cut execution time by 60%.

In this article, we’ll share how to figure out if you are in the same situation, how bad it actually is, and how to fix it step-by-step.

Three Questions to Find Out If You Actually Have a Problem

Before any solution, you need an honest read on your current exposure. Most teams are watching the wrong metric and drawing the wrong conclusion from it.

Answer these three questions using your actual CI data from the last 60 days.

- Question 1: How many distinct tests have failed flakily at least once in the last 60 days? Count unique tests, not the number of times tests failed.

- Question 2: Of the tests currently in your quarantine folder, how many have a named owner and a documented fix-by date?

- Question 3: Has your team ever correlated flaky failure timestamps across your suite to identify which tests fail together, before beginning any individual investigation?

You’ll See Results Like These

| Signal | Healthy | Warning | Critical |
| --- | --- | --- | --- |
| Unique flaky tests in 60 days | < 2% of the suite | 2–5% of the suite | > 5% of the suite |
| Quarantined tests with a named owner | All of them | Some of them | None or few |
| Failure timestamp correlation done | Yes, regularly | Once or rarely | Never attempted |
| Per-execution flaky rate | < 1% | 1–3% | > 3% |
| Team reaction when CI fails | Investigates root cause | Re-runs and moves on | Doesn't notice anymore |

If two or more rows in your table land in Warning or Critical, your CI signal is already degraded in ways your dashboard is not showing you. The rest of this article is about what architectural changes you need to make to resolve this.
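As a rough sketch of how the two numeric rows of the table could be checked automatically, the snippet below maps percentages onto the Healthy, Warning, and Critical bands. The `SuiteSignals` fields and function names are illustrative assumptions, not part of any CI tool; the three qualitative rows still need a human answer and can be added to the same dictionary by hand.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SuiteSignals:
    # Hypothetical inputs you would pull from 60 days of CI data.
    total_tests: int          # size of the test inventory
    unique_flaky_tests: int   # distinct tests that failed flakily at least once
    total_executions: int     # individual test executions in the window
    flaky_executions: int     # executions classified as flaky failures


def band(value_pct: float, warning_from: float, critical_above: float) -> str:
    """Map a percentage onto the Healthy / Warning / Critical bands."""
    if value_pct > critical_above:
        return "Critical"
    if value_pct >= warning_from:
        return "Warning"
    return "Healthy"


def assess(signals: SuiteSignals) -> dict[str, str]:
    """Classify the two numeric rows using the thresholds from the table."""
    unique_pct = 100 * signals.unique_flaky_tests / signals.total_tests
    per_exec_pct = 100 * signals.flaky_executions / signals.total_executions
    return {
        "unique_flaky_tests": band(unique_pct, 2.0, 5.0),
        "per_execution_rate": band(per_exec_pct, 1.0, 3.0),
    }


def is_degraded(bands: dict[str, str]) -> bool:
    """Two or more rows outside Healthy means the CI signal is degraded."""
    return sum(1 for b in bands.values() if b != "Healthy") >= 2
```

For a 2,000-test suite with 320 affected tests and a 1.5% per-execution rate, `assess` reports the unique-test row as Critical and the per-execution row as Warning, so `is_degraded` returns `True`.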


Fix 1: Stop Tracking the Wrong Number

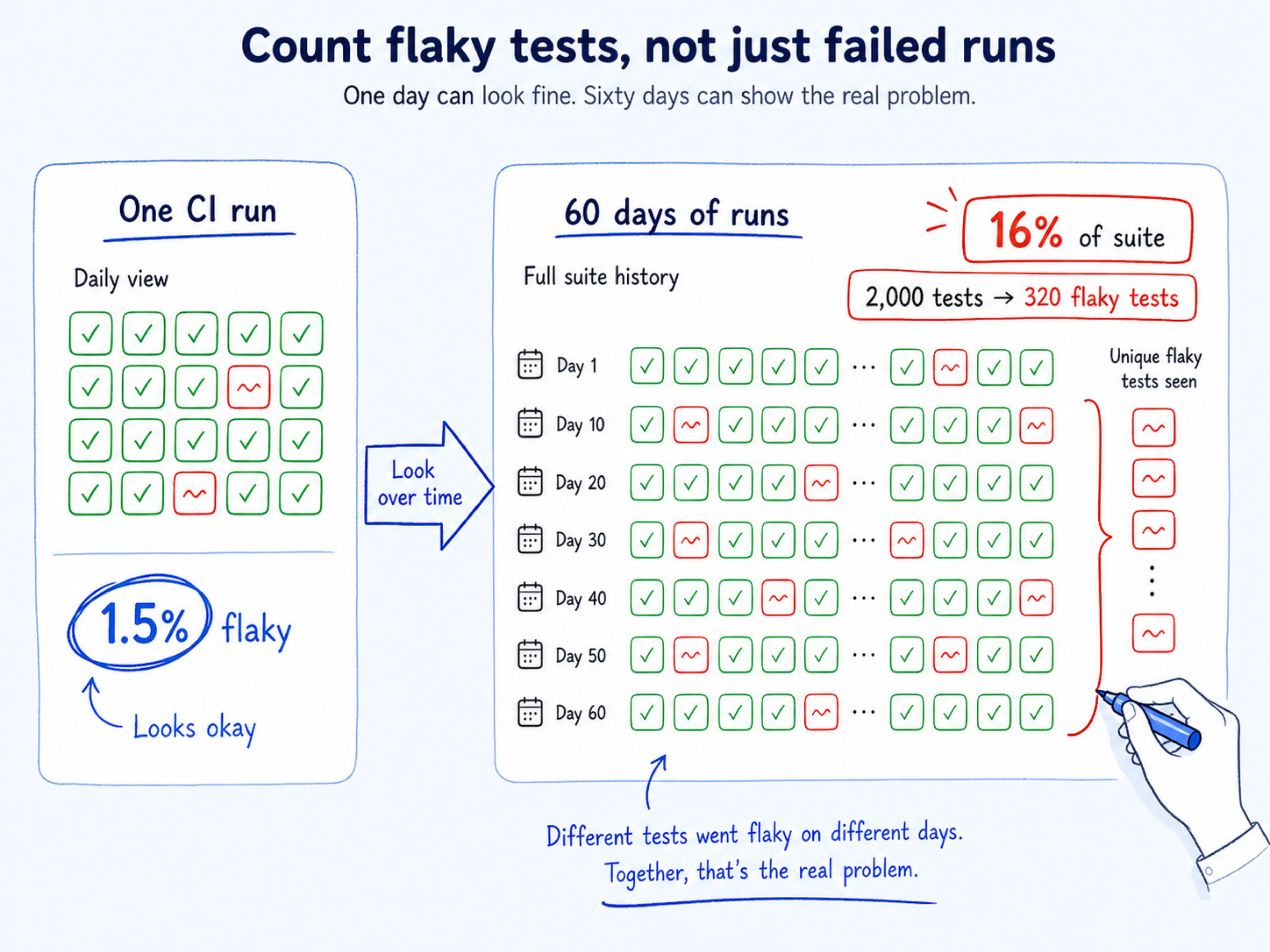

Your monitoring dashboard probably shows a per-execution flaky rate, the percentage of individual runs that fail flakily on a given day.

A 2% rate feels manageable, especially if it has been stable for months.

Stability is the problem. It creates a false sense of control while the real damage accumulates elsewhere.

John Micco, Engineering Productivity Researcher at Google, put a precise number on this gap: a 1.5% per-execution flaky rate affects 16% of total test inventory over time. Against a 2,000-test suite, that is roughly 320 distinct tests behaving non-deterministically over a quarter, while your dashboard showed a tidy, manageable number every single day.

The metric you are watching tells you how many runs failed today. What you actually need to know is how much of your suite can no longer be trusted.

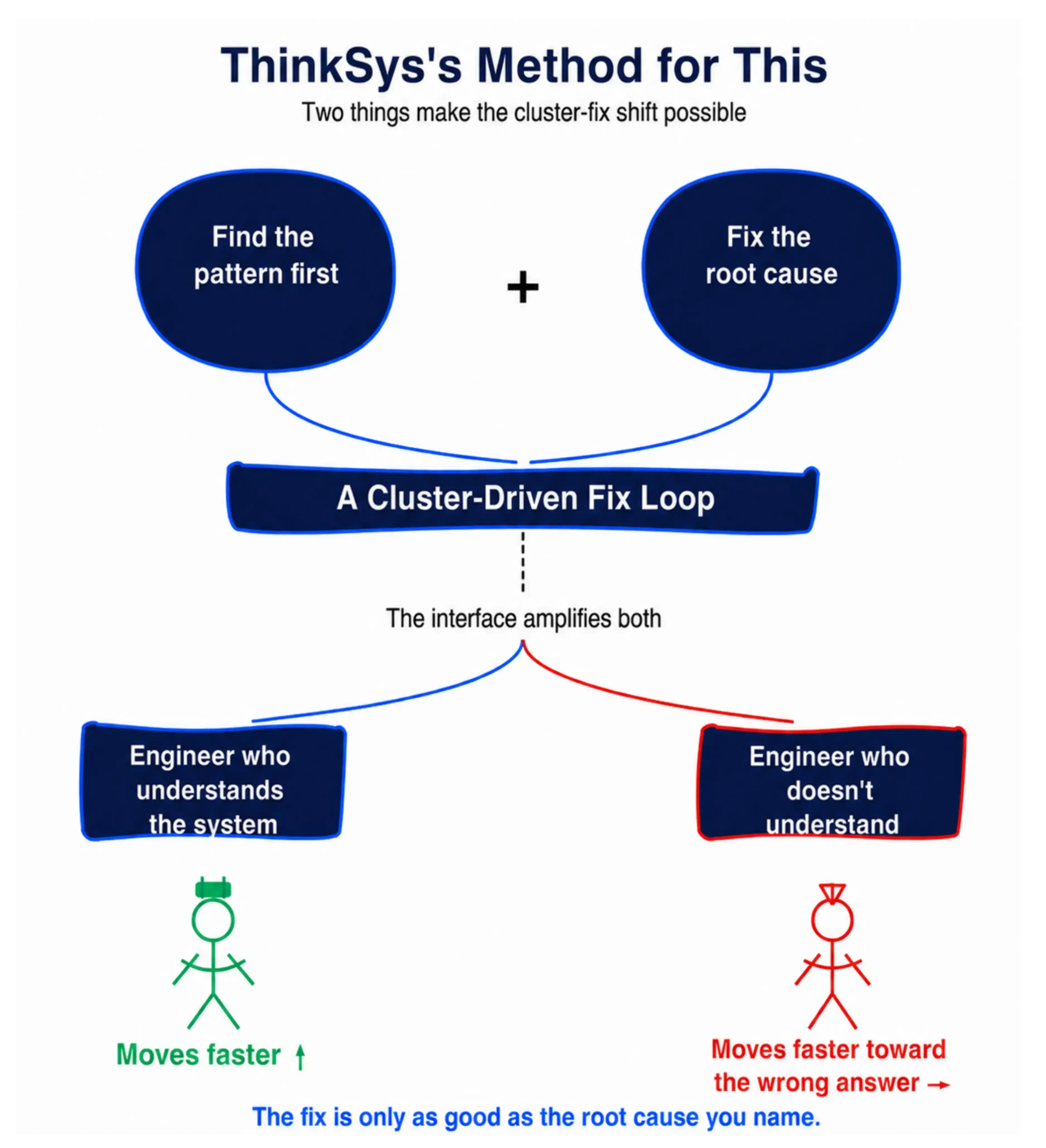

How We Approach This at ThinkSys

- Switch your primary monitoring metric: Pull your CI logs for the last 60 days and count unique tests that have failed flakily at least once; that is your real exposure number, not the per-run rate.

- Set a cumulative threshold: If more than 5% of your test inventory has shown flaky behavior in the last quarter, treat it as a critical signal.

- Build a trend dashboard: Track the unique flaky test count week over week. A rising count of affected tests is an early warning that your current setup will never surface on its own.

- Report both numbers in sprint reviews: List the per-execution rate alongside cumulative inventory impact. The gap between them is what tells you whether your situation is stable or quietly getting worse.
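To make the difference between the two numbers concrete, here is a minimal sketch that computes both from the same run records. The `Run` record shape and the toy data are assumptions for illustration; in practice the records would come from your CI logs, with the flaky classification done upstream.

```python
from collections import namedtuple

# One record per test execution. "flaky" marks a failure that was classified
# as non-deterministic (for example, it passed on retry). How that
# classification happens is assumed to be handled by your CI reporting.
Run = namedtuple("Run", ["test_id", "flaky"])


def per_execution_rate(runs):
    """Share of individual executions that failed flakily."""
    return sum(r.flaky for r in runs) / len(runs)


def cumulative_exposure(runs, inventory_size):
    """Share of the test inventory that failed flakily at least once."""
    affected = {r.test_id for r in runs if r.flaky}
    return len(affected) / inventory_size


# Toy data: 10 tests, 20 executions each; 4 different tests flake once.
runs = [Run(f"test_{t}", False) for t in range(10) for _ in range(20)]
for t in range(4):
    runs[t * 20] = Run(f"test_{t}", True)

print(f"per-execution rate:  {per_execution_rate(runs):.1%}")
print(f"cumulative exposure: {cumulative_exposure(runs, 10):.1%}")
```

On this toy data the per-execution rate is 2.0% while the cumulative exposure is 40.0%: the same failures look small per run and large across the inventory.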

Fix 2: Treat Quarantine as a Coverage Ledger

Open your quarantine folder right now. Find the oldest test in it. Check when it was quarantined, then check whether a single comment, commit, or ticket explains why it was moved there or when it is coming back.

For most teams, the honest answer is: it was flagged, it was moved, and that was the last anyone thought about it. The test is still counted in your inventory. The coverage it was supposed to provide is quietly gone.

Martin Fowler named this failure mode directly: quarantined tests stop helping with regression coverage, and without accountability, the quarantine folder becomes a one-way door. Atlassian built its Flakinator system precisely because it knew engineers would not follow up on quarantined tests voluntarily.

Their fix was automatic routing to a named owner, a ticket, and a deadline. The design assumption was explicit: good intentions are not enough.

Every test behind that door is a regression your suite will no longer catch. Your coverage percentage looks the same. Your actual coverage does not.

What ThinkSys Does Differently Here

- Audit your quarantine list this sprint: We assign a named owner and a fix-by date to every test in it. Any test that cannot get an owner this sprint gets removed from inventory, and the coverage gap gets documented explicitly, not silently absorbed.

- Put a quarantine SLA in place: No test stays quarantined longer than two sprints without a documented root cause and an active ticket attached to it.

- Make the count visible in your pipeline: Quarantined test totals should appear in every CI summary. Rising counts trigger a team-level review, not just a quiet individual follow-up.

- Build a re-entry gate: A test coming out of quarantine needs a documented fix and at least 10 consecutive clean runs before it is restored to the active suite.
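The audit, SLA, and re-entry gate above can be sketched as a small ledger check. This is an illustration under stated assumptions, not a description of any existing tool: the `QuarantineEntry` fields are hypothetical, and a 14-day sprint is assumed so that "two sprints" becomes 28 days.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

SPRINT = timedelta(days=14)      # assumed sprint length
MAX_QUARANTINE = 2 * SPRINT      # SLA: two sprints
CLEAN_RUNS_TO_REENTER = 10       # re-entry gate from the list above


@dataclass
class QuarantineEntry:
    test_id: str
    quarantined_on: date
    owner: Optional[str] = None
    fix_by: Optional[date] = None
    root_cause: Optional[str] = None
    ticket: Optional[str] = None


def sla_violations(entries: List[QuarantineEntry], today: date) -> List[str]:
    """Tests missing an owner or fix-by date, or past the SLA
    without both a documented root cause and a ticket."""
    flagged = []
    for e in entries:
        unowned = e.owner is None or e.fix_by is None
        stale = (today - e.quarantined_on > MAX_QUARANTINE
                 and not (e.root_cause and e.ticket))
        if unowned or stale:
            flagged.append(e.test_id)
    return flagged


def may_reenter(fix_documented: bool, recent_results: List[bool]) -> bool:
    """True when a fix is documented and the last 10 runs all passed."""
    last = recent_results[-CLEAN_RUNS_TO_REENTER:]
    return fix_documented and len(last) == CLEAN_RUNS_TO_REENTER and all(last)
```

Printing `len(entries)` and the output of `sla_violations` in every CI summary is one simple way to keep the quarantine count visible.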

Fix 3: Debug Root Causes, Not Individual Tests

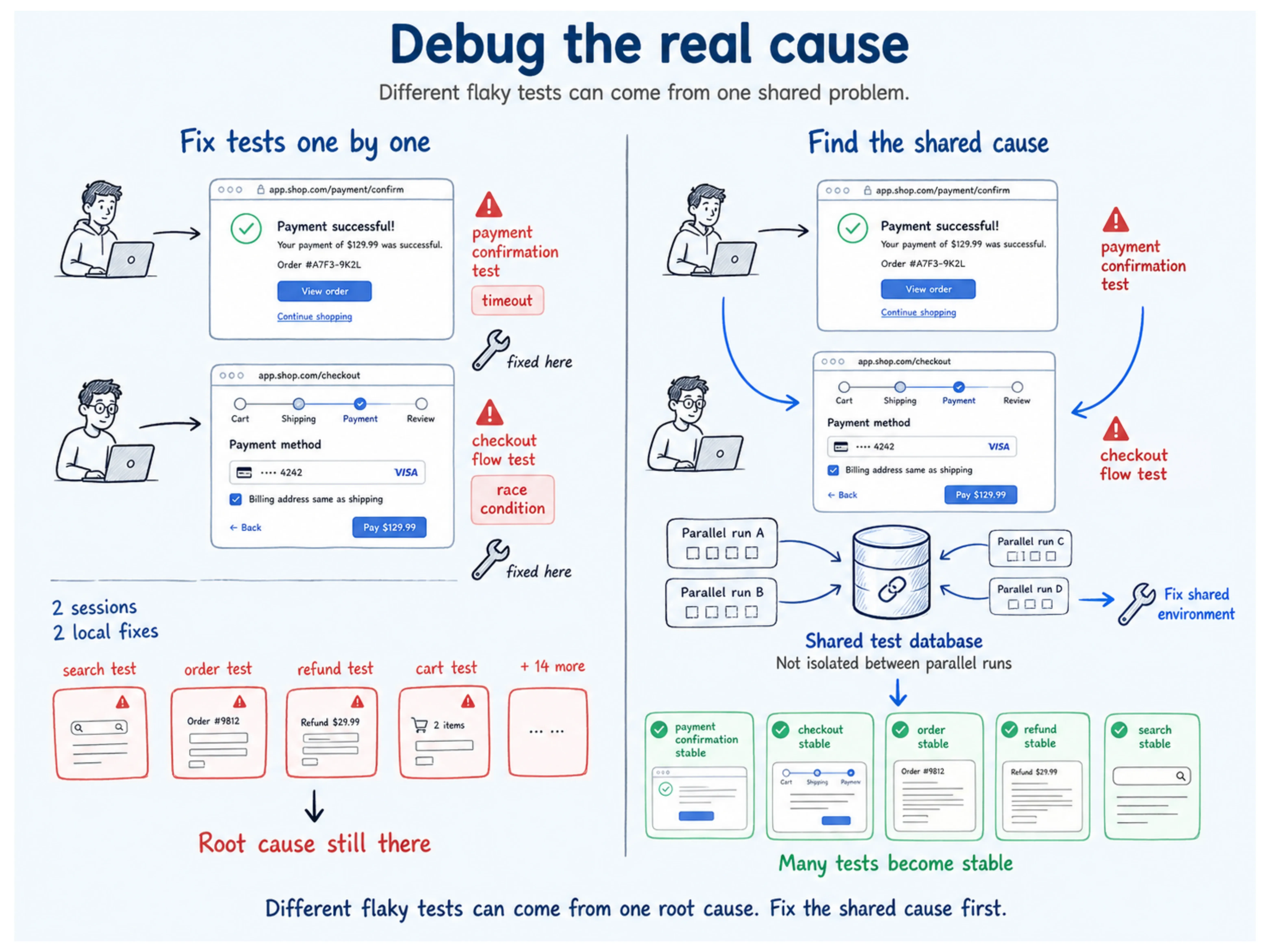

This is where your team is probably spending the most engineering time for the least return.

Consider an example: an engineer spends three hours chasing a flaky timeout in the payment confirmation test and fixes a hardcoded wait. The next week, a different engineer spends two hours on a race condition in the checkout flow. Different tests, different people, different days, but both failures trace to the same shared resource: a test environment database that is not properly isolated between parallel runs.

Two engineering sessions. One root cause. Fourteen more tests in quarantine with the same underlying condition, still waiting.

Owain Parry and colleagues at the University of Sheffield, Allegheny College, and Carnegie Mellon University documented this in a 2025 study: 75% of flaky tests fail in correlated clusters, with a mean cluster size of 13.5 tests. Failures are not random; they cluster because they share root causes. Fix the shared condition, and you resolve roughly 13 tests at once on average.

The economics compound fast. Leinen and colleagues at Daimler Truck AG measured the cost directly: manually investigating a flaky failure costs $5.67 in developer time. An automatic retry costs $0.02, but resolves nothing. It just makes the failure disappear until the next run. A team of 50 engineers seeing 50 flaky failures per day and choosing retry over investigation is spending over $100,000 a year to avoid a problem that gets worse every sprint.

- Before your team touches a single test, run timestamp correlation: Pick your last 30 flaky failures. Group the tests that fail within the same CI window; those groups are your clusters, and each one likely shares a single root cause.

- Name the condition: The same three to five conditions appear repeatedly across most suites, including non-isolated environment state, shared test data, and timing dependencies in async operations. Categorize each cluster by cause.

- Fix the condition: A shared database configuration causing 14 failures is one infrastructure fix, not 14 individual test repairs.

- Log what you fix and why: When a root cause is resolved, document which tests it affected and what changed. That pattern library becomes your fastest diagnostic tool the next time a cluster appears.
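The timestamp correlation step in the list above can be approximated in a few lines. The sketch below is one possible approach, not the method used in the cited study: it buckets failures into fixed time windows, links two tests when they fail in the same window at least `min_shared` times, and returns the connected groups. The window size and threshold are assumptions you would tune to your pipeline.

```python
from collections import Counter, defaultdict
from itertools import combinations


def failure_clusters(failures, window_seconds=600, min_shared=2):
    """Group tests that repeatedly fail within the same time window.

    failures: iterable of (test_id, unix_timestamp) for flaky failures.
    Returns clusters as sorted lists of test ids, largest cluster first.
    """
    # Bucket failures into fixed windows.
    by_window = defaultdict(set)
    for test_id, ts in failures:
        by_window[int(ts // window_seconds)].add(test_id)

    # Count how often each pair of tests fails in the same window.
    co_failures = Counter()
    for tests in by_window.values():
        for pair in combinations(sorted(tests), 2):
            co_failures[pair] += 1

    # Union-find over pairs that co-fail often enough.
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for (a, b), count in co_failures.items():
        if count >= min_shared:
            parent[find(a)] = find(b)

    groups = defaultdict(set)
    for test in list(parent):
        groups[find(test)].add(test)
    return sorted((sorted(g) for g in groups.values()), key=len, reverse=True)
```

Fixed windows can split two failures that land on either side of a boundary, so treat the output as a list of candidates to investigate together rather than a definitive grouping. Grouping by CI run id instead of by timestamp is a reasonable alternative if your logs carry one.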

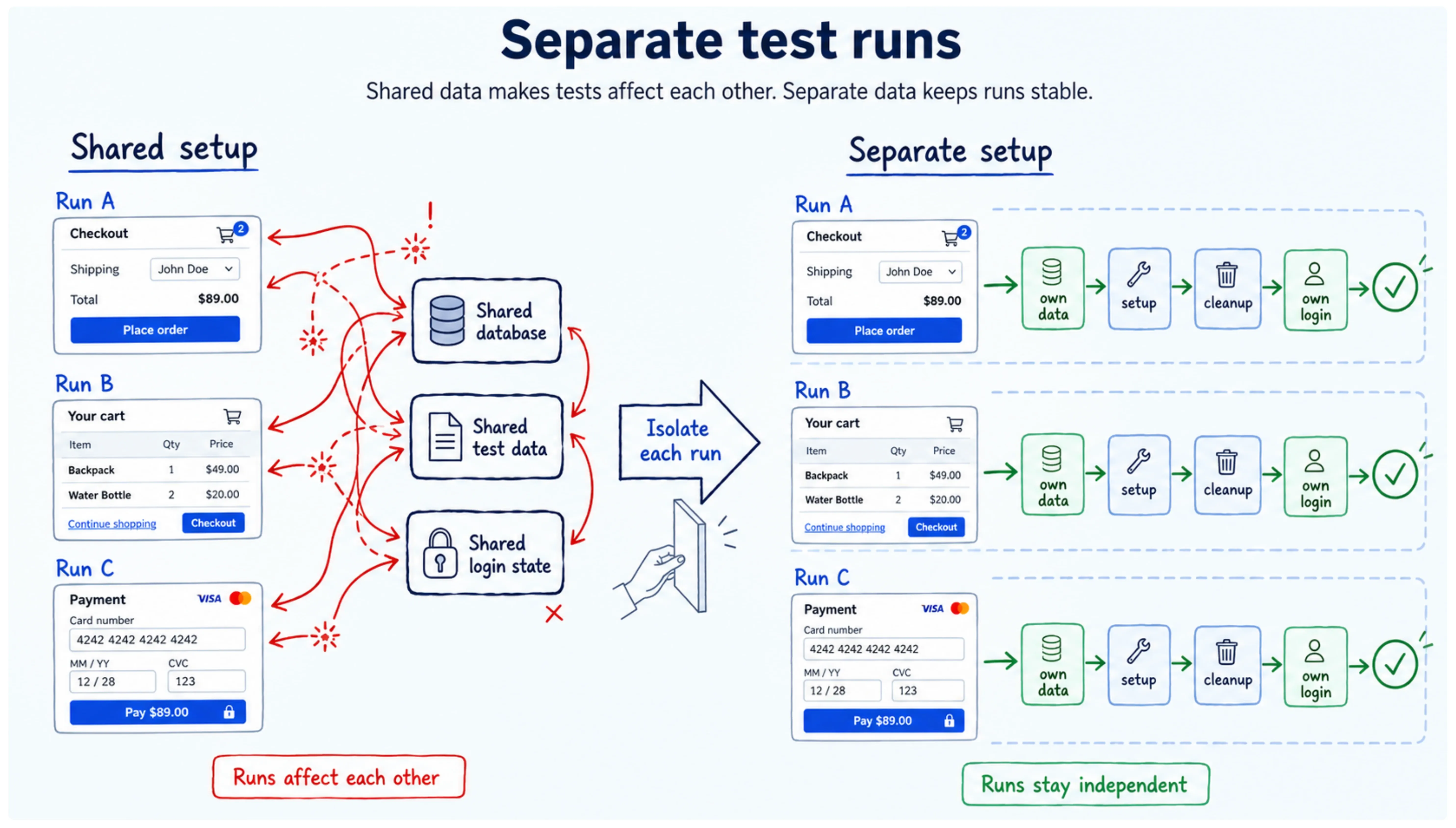

Fix 4: Build Isolation Into the Architecture

Most flaky test clusters trace to one underlying design failure: tests that share state. Shared databases, shared test data, shared environment configuration: any of these creates conditions where the order of execution, or the speed of a parallel run, determines whether your tests pass or fail. Non-determinism is a design gap that no amount of individual test fixing will close.
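To illustrate how shared state makes outcomes depend on execution order, here is a deliberately small sketch. The account dictionary stands in for a shared database row, and `make_account` is a hypothetical factory; a real one would create and clean up actual records.

```python
import itertools

# Shared fixture: every check mutates the same account.
shared_account = {"balance": 100}


def check_withdraw_shared():
    shared_account["balance"] -= 80
    return shared_account["balance"] >= 0   # passes only if it runs first


def check_purchase_shared():
    shared_account["balance"] -= 50
    return shared_account["balance"] >= 0   # passes only if it runs first


# Data factory: each check builds its own account and never shares it.
_ids = itertools.count(1)


def make_account(balance=100):
    """Hypothetical factory returning a fresh, uniquely identified account."""
    return {"id": next(_ids), "balance": balance}


def check_withdraw_isolated():
    account = make_account()
    account["balance"] -= 80
    return account["balance"] >= 0


def check_purchase_isolated():
    account = make_account()
    account["balance"] -= 50
    return account["balance"] >= 0


def run_in_order(checks):
    """Run checks sequentially against a freshly reset shared account."""
    shared_account["balance"] = 100
    return [check() for check in checks]
```

With the shared account, whichever check runs second fails, so reordering or parallelizing the suite changes which test goes red. With the factory, both checks pass in either order.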

How ThinkSys Handled This for Centerbase, and How We Apply It More Broadly

For Centerbase, isolation was the core of the engagement. Here is what we implemented, and what we put in place across engagements at this stage of suite growth:

- Intelligent wait mechanisms: We replace hardcoded waits with condition-based waits built into the framework itself. For Centerbase, this single change, integrated into a custom Playwright framework, reduced flaky test failures by 85%.

- Robust locator strategies: Reusable page objects and locators that hold up against minor UI changes. Tests that break every time a class name shifts are not flaky; they are fragile by design, and your team is paying the maintenance cost for that fragility every sprint.

- Conditional logic for optional UI states: Production-like environments surface UI states that controlled test environments suppress. We build conditional handling for those optional states directly into the framework, so your tests do not fail on variance that is actually expected behavior.

- Cached authentication state: Repeated login flows are a compounding source of both execution time and instability. Caching login state across test sessions cut Centerbase's test execution time by 60% and removed an entire category of timing-related failures.

- Data factories over shared fixtures: Each test run generates its own data and cleans it up on teardown. Shared fixtures create implicit dependencies between tests that produce failures no one can reliably reproduce or trace.

- Environment-specific setup and teardown: Every parallel test runner needs a fully isolated environment slice. Shared environment state is the single most common root cause of correlated failure clusters we see across suites.
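The idea behind condition-based waits from the list above can be shown with a generic polling helper. This is a minimal sketch, not the Centerbase framework and not Playwright's API; Playwright ships its own auto-waiting assertions, which are usually the better choice inside browser tests. The helper name `wait_until` and its defaults are assumptions.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.1):
    """Poll `condition` until it returns a truthy value or `timeout` elapses.

    Returns the truthy value so callers can use what they waited for.
    Raises TimeoutError rather than silently continuing.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout}s")
        time.sleep(interval)


# Hypothetical usage: wait for an order to reach a state instead of
# sleeping for a fixed five seconds and hoping it is done.
#   wait_until(lambda: get_order_status(order_id) == "confirmed", timeout=15)
```

A fixed sleep is either too short on a slow run or wasted time on a fast one. Polling for the condition returns as soon as it holds and fails loudly, with a clear timeout, when it never does.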

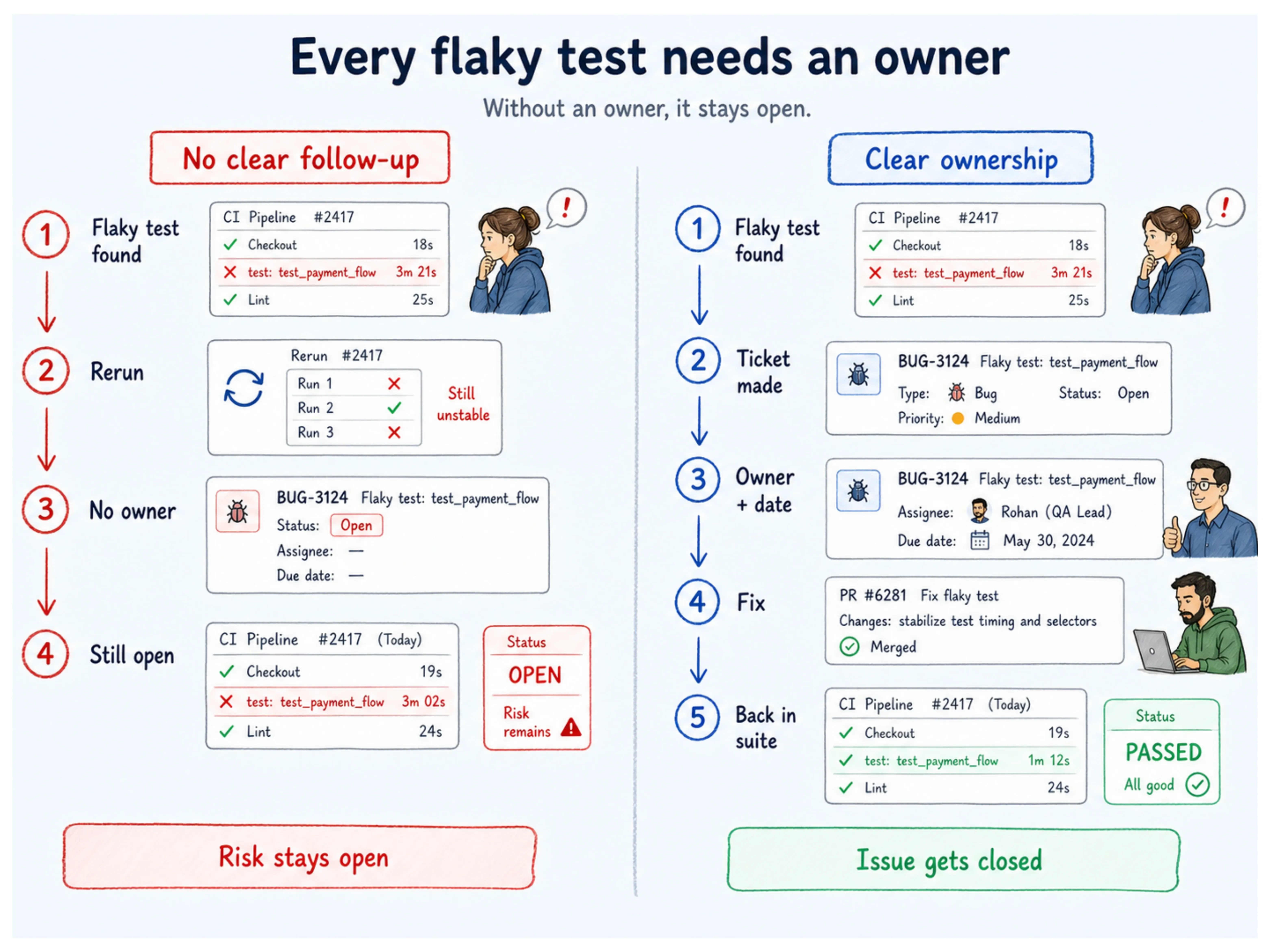

Fix 5: Create Ownership Infrastructure, Not Just Ownership Intent

Spotify cut its flakiness from 6% to 4% in two months by making flaky tests visible to the people who owned them. Before a single test was repaired, the accountability structure changed, and the rate dropped.

Jason Palmer says that without confidence in your test suite, you are in no better position than a team with zero tests. What your team needs is not more intent around ownership; it is infrastructure that makes ownership unavoidable.

Naming someone in a comment and moving on does not work. Forcing functions do: automatic tickets, fix-by dates with pipeline consequences, and escalation when deadlines pass without action.

The Ownership Model ThinkSys Puts in Place

- Every flaky test gets a named owner within 24 hours of identification: Not a team. A person. The distinction matters more than it sounds.

- Automatic ticketing on detection: When a test is flagged flaky, a ticket gets created automatically in your project management tool, pre-populated with the test name, failure timestamps, and a default fix-by date. Your team should not have to remember to create it.

- Pipeline consequences: A test flagged flaky with no active owner or ticket after one sprint gets removed from the suite automatically. The coverage gap is logged. The removal forces a real decision that social accountability quietly lets teams avoid.

- Flakiness metrics in engineering reviews: Unique flaky test count, quarantine count, and mean time to resolution belong in your sprint reviews alongside velocity and bug count. What gets measured in those rooms gets managed.

The Real Cost of Waiting

Leinen and colleagues at Daimler Truck AG found that flaky tests consume 2.5% of productive developer time: 1.1% investigating failures and 1.3% repairing tests. For a 100-person engineering team at $150K average loaded cost, that is $375,000 per year in direct productivity loss.

The Bitrise Mobile Insights Report, analyzing over 10 million builds between 2022 and 2025, found that the share of teams experiencing flakiness grew from 10% to 26% in that period, a 160% increase as CI pipelines became 23% more complex.

| Cost Category | Annual Impact (100-person team) |
| --- | --- |
| Developer time investigating failures | ~$165,000 |
| Developer time repairing flaky tests | ~$195,000 |
| Retry-only "resolution" (50 failures/day) | ~$100,000+ |
| Total direct productivity loss (2.5% of developer time) | ~$375,000 |
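The time-based rows in the table follow from simple arithmetic, which you can rerun with your own team size and loaded cost. The function name below is illustrative; the percentages are the ones quoted above.

```python
def flaky_cost(team_size, loaded_cost, share_of_time):
    """Annual cost of a given share of developer time."""
    return team_size * loaded_cost * share_of_time


TEAM, COST = 100, 150_000
investigating = flaky_cost(TEAM, COST, 0.011)   # 1.1% investigating failures
repairing = flaky_cost(TEAM, COST, 0.013)       # 1.3% repairing tests
total = flaky_cost(TEAM, COST, 0.025)           # 2.5% overall

print(f"investigating: ${investigating:,.0f}")
print(f"repairing:     ${repairing:,.0f}")
print(f"total:         ${total:,.0f}")
```

Note that the two component shares add up to 2.4%, so the investigating and repairing rows sum to $360,000, while the total row is computed from the 2.5% headline figure.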

Every sprint you defer the architecture work, the suite falls further behind a product that keeps growing.

When to Bring In Outside Help

Here is the honest reality: the engineers who could fix your flaky test architecture are the same ones building your next release. That trade-off does not resolve on its own.

If any of this sounds familiar, it might be time to talk to someone who has done this before:

- The same tests have been sitting in quarantine for months with no owner and no fix date

- Your team re-runs failing tests more often than it investigates them

- Your roadmap leaves no room for QA infrastructure work this quarter

ThinkSys has worked through this with SaaS teams at exactly this stage. We know which patterns cause the most damage, which fixes hold up as suites scale, and how to run this work alongside your roadmap rather than instead of it.

If your team has stopped fully trusting its own CI results, that is worth a conversation. We will tell you honestly what we find and what it takes to fix it.
